Before Your Firm Uses AI-Generated Imagery, Read This

Artificial intelligence is changing how professional services firms think about visual content. AI-generated headshots, B-roll, and marketing imagery promise to cut costs and speed up production. For busy marketing teams managing global firms, the appeal is obvious.

But before your firm makes that switch, there’s a conversation you need to have with your legal team.

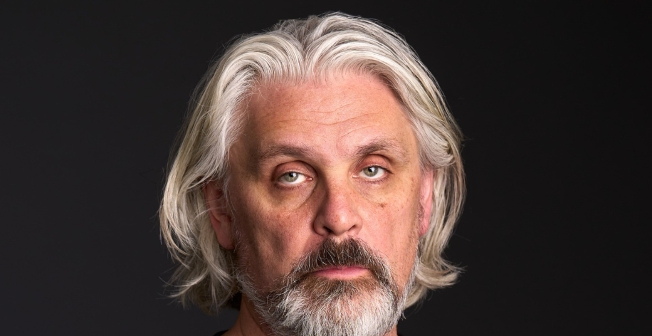

We sat down with Daliah Saper, founder and principal attorney at Saper Law, a Chicago-based firm specializing in entertainment, advertising, and media law. Saper has been navigating emerging technology law since the early days of social media, and today she’s tracking AI litigation as closely as anyone in the country. What she shared should give any professional services firm pause.

The Legal Landscape Is Unsettled (by Design)

Saper draws a direct parallel between where AI law stands today and where social media law stood twenty years ago. “Every day we’re seeing this struggle to balance the need for laws that are permissive enough to encourage innovation, but also laws that curb any misuse of that technology,” she says.

That uncertainty isn’t temporary noise. It’s the operating environment, and firms that move fast with AI-generated content are making decisions before a settled legal framework exists.

AI Imagery May Carry Copyright Liability

The most immediate risk Saper flags is copyright infringement. AI tools are trained on existing content, and the rights to that content are largely unresolved.

“Creators are absolutely outraged that some of these large language models have trained on, or are directly creating output that is a derivative of, their work,” she explains. Class action lawsuits involving authors, photographers, and media companies are already moving through the courts.

For professional services firms, this creates direct exposure. If AI-generated imagery is found to be derived from copyrighted source material, the firm that published it could be liable, regardless of whether it built the AI tool itself.

Saper is direct about who gets targeted. “Until you have subscription models where the training material is licensed, we’re going to see reticence by companies with deep pockets that are targets for lawsuits.” Large law firms and professional services companies are exactly that.

The Quality Isn’t There Yet Either

Setting aside legal risk entirely, the output still isn’t good enough for professional use. Saper is candid about this.

“We’re in this weird stage where an AI looks like AI,” she says. At the senior level, where the credibility of individual professionals is on the line, that distinction matters. AI-generated headshots might technically do the job, she notes, but “it’s missing some things that you probably want there,” the same way an AI-drafted contract can look complete while quietly omitting something critical.

For firms whose attorneys and partners are the product, a headshot that reads as artificial is worse than no headshot at all.

The B-Roll Problem

This risk is particularly relevant for corporate video. Many firms are exploring AI-generated B-roll as a cost-saving alternative, and on the surface, the economics make sense.

But Saper cautions that cost savings don’t offset legal exposure. The firms pushing back on AI B-roll aren’t doing so because it looks inauthentic. “Their nervousness is not a function of consumer backlash. It’s a function of the ambiguity as it relates to legal liability.” The concern is that the tool generating the footage may have been trained on content belonging to someone else.

Imagine investing in a corporate video production, publishing it widely, and then having to pull it down months later over a copyright claim. That’s the scenario Saper is advising firms to think about now.

Disclosure Requirements Are Coming

Beyond copyright, Saper points to another development worth watching: mandatory AI disclosure. Drawing a parallel to FTC endorsement rules, she sees the regulatory direction heading toward transparency.

“Maybe we’re at a place where AI usage has to be prominently disclosed,” she says, “so consumers know what they’re dealing with.” For professional services firms whose brand is built on trust, mandatory disclaimers on marketing imagery aren’t just a legal issue; they’re a brand issue.

Authenticity Still Has a Measurable Business Case

Between 55 and 75 percent of visitors to law firm websites view attorney bios, making them among the most trafficked pages on the site. What they find there shapes the decision of whether to make contact.

“People are driven by and want authentic content,” Saper says. “We want to consume information and content that at least feels real and not overly produced.” A professional portrait that communicates genuine competence and approachability is one of the highest-leverage brand touchpoints a firm has, and right now, AI can’t reliably deliver that.

The Prudent Move Right Now

Saper’s advice is consistent: don’t fear the technology, but don’t get ahead of the legal reality.

“If you are in a position where AI usage exposes you to litigation or questions about ownership, it’s pretty crucial to consult with an attorney and get a pair of more seasoned, experienced eyes on whatever it is you’re doing.”

For visual content specifically, the safest path is working with professional photographers who deliver work with clear ownership, no copyright ambiguity, and no disclosure risk. The legal framework around AI imagery will eventually catch up, but until it does, the firms that move carefully are the ones that won’t be cleaning up a mess later.

Ready to strengthen your firm’s first impression? At Gittings Global, we help law firms create professional photography and video that builds trust at scale. Whether you need headshots across multiple offices or bio videos that showcase your attorneys’ expertise, we ensure visual consistency that supports your business development goals.

Contact us to discuss your firm’s needs.
